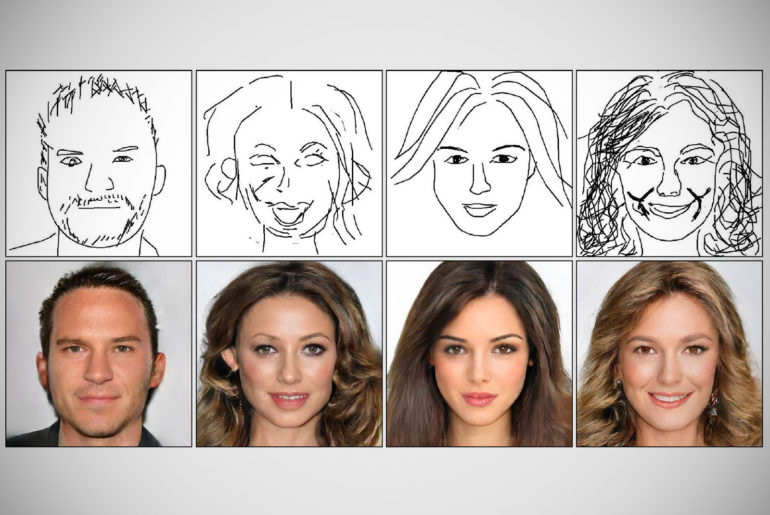

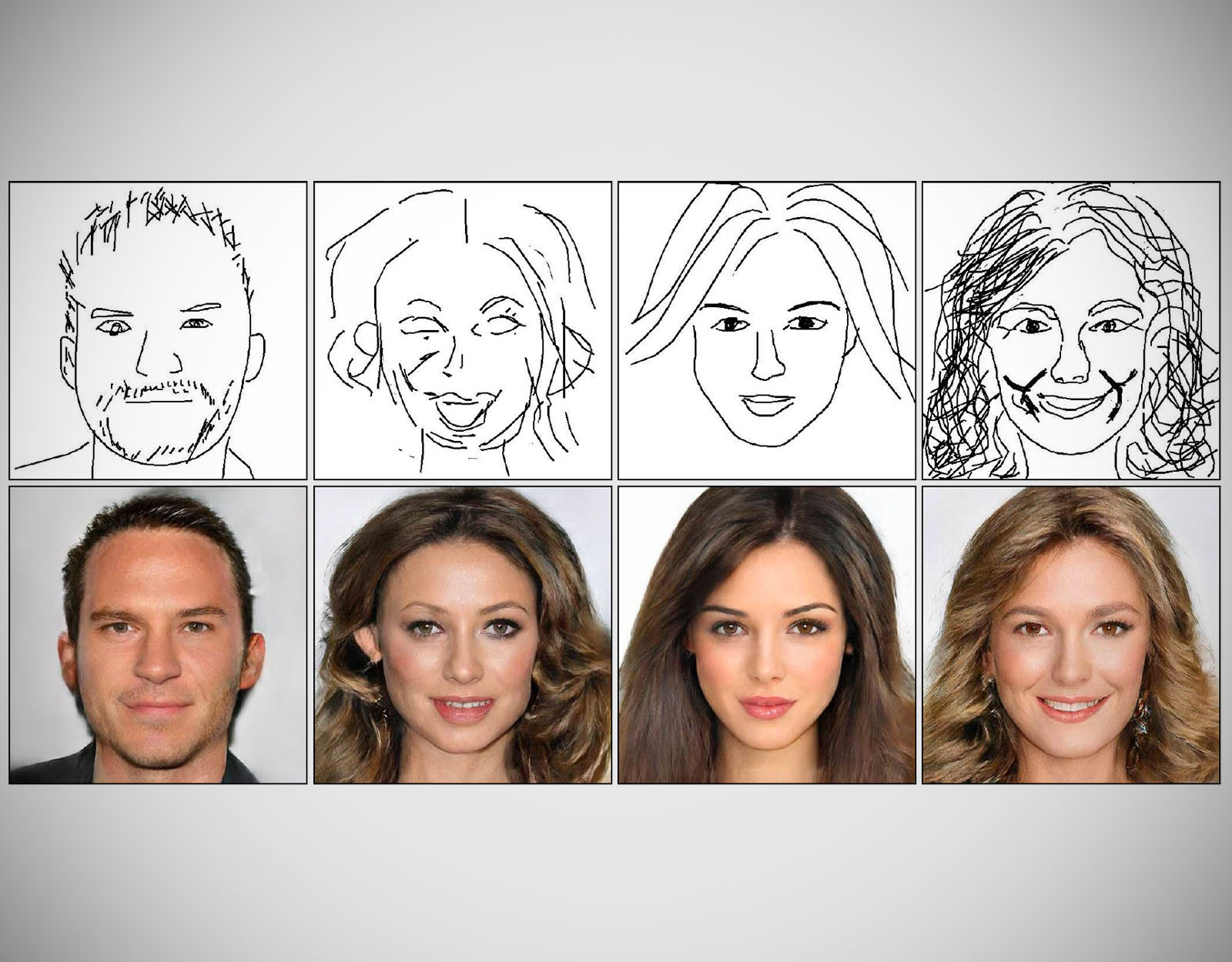

A research team from the Chinese Academy of Sciences and the City University of Hong Kong has unveiled DeepFaceDrawing, an AI-powered framework that turns sketches into photorealistic portraits. The deep learning system is modular: it handles the most notable facial components (eyes, nose, mouth, and face shape) individually and encodes each as a feature vector, then merges these vectors to create a realistic image.

Other deep image-to-image translation techniques may generate face images from freehand sketches faster, but they require professional sketches or even edge maps as input. DeepFaceDrawing instead implicitly models the shape space of recognizable face images and then synthesizes a face image in this space to approximate the input sketch.

“Our method essentially uses input sketches as soft constraints and is thus able to produce high-quality face images even from rough and/or incomplete sketches,” said researcher Shu-Yu Chen.
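
To make the "soft constraint" idea concrete, here is a minimal sketch in Python of how a rough sketch's feature vector could be pulled toward a set of known face features. This is not the researchers' code: the function name, the inverse-distance weighting, and the `blend` parameter are all assumptions chosen for illustration, and the feature vectors are assumed to come from some encoder that is not shown.

```python
import numpy as np

def project_to_shape_space(query, samples, k=3, blend=1.0):
    """Pull a sketch's feature vector toward known face features.

    Hypothetical illustration only, not the DeepFaceDrawing implementation.

    query:   (d,) feature vector of the input sketch
    samples: (n, d) feature vectors of known, plausible face sketches
    k:       number of nearest samples to combine
    blend:   0.0 keeps the query unchanged, 1.0 uses the projection only
    """
    query = np.asarray(query, dtype=float)
    samples = np.asarray(samples, dtype=float)

    # Distance from the query to every known sample.
    dists = np.linalg.norm(samples - query, axis=1)
    nearest = np.argsort(dists)[:k]

    # Inverse-distance weights (an assumed, simple weighting scheme),
    # normalized so they sum to 1.
    weights = 1.0 / (dists[nearest] + 1e-8)
    weights /= weights.sum()

    # Weighted combination of the nearest known samples.
    projected = weights @ samples[nearest]

    # The sketch acts as a soft constraint: it steers the result
    # without having to be reproduced exactly.
    return (1.0 - blend) * query + blend * projected


known = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [10.0, 10.0]])
rough = np.array([0.5, 0.5])
refined = project_to_shape_space(rough, known, k=3, blend=1.0)
```

With `blend=0.0` the function returns the input untouched (a hard constraint), while larger values trade fidelity to the drawing for plausibility, which is the trade-off the quote describes.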